Tokenization in Data Security: A Strategic Guide for 2026

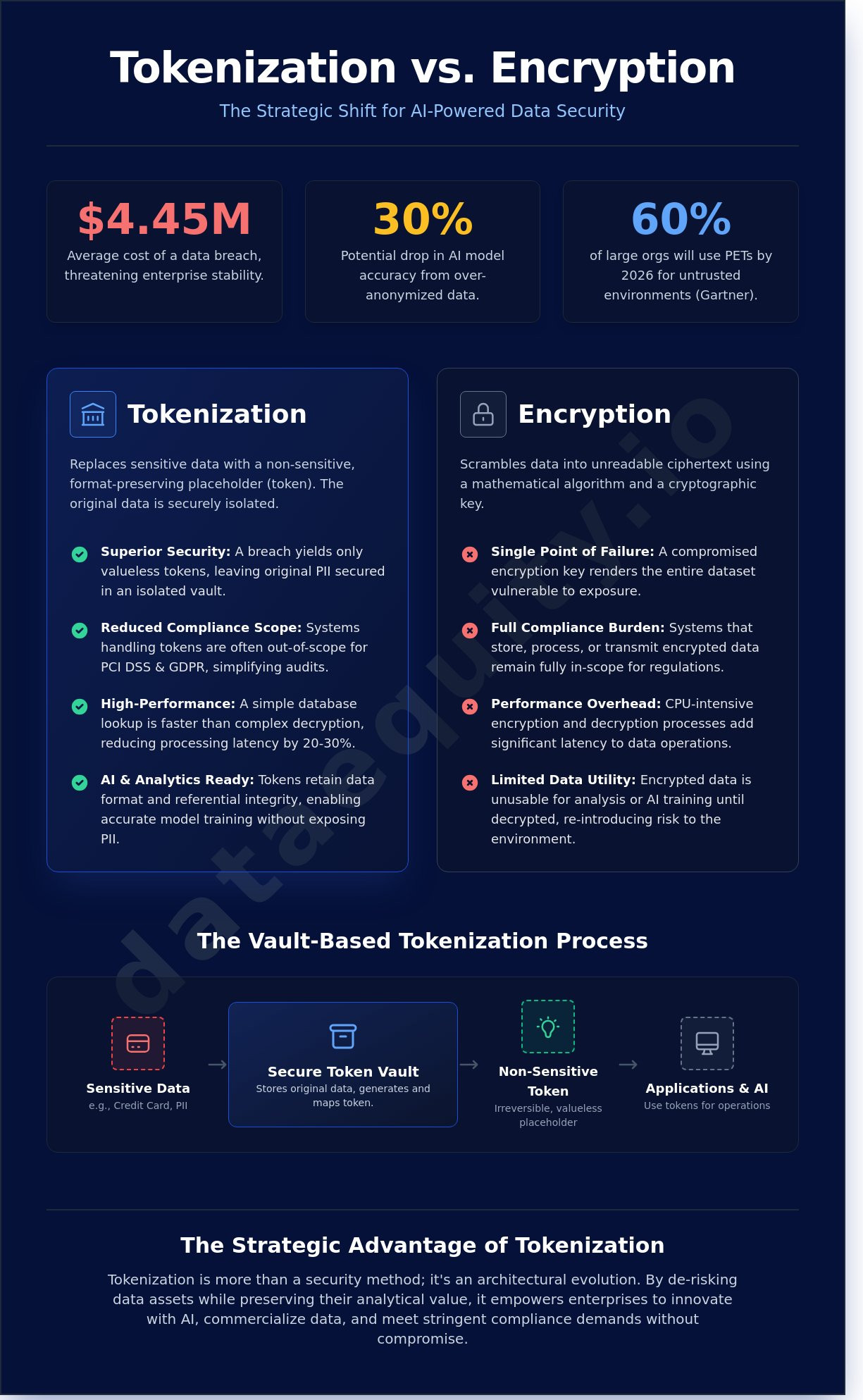

By 2026, traditional encryption will likely be viewed as a legacy bottleneck for any enterprise attempting to scale AI operations, especially since Gartner predicts that 60% of large organizations will use privacy-enhancing techniques to handle sensitive data in untrusted environments. You've likely faced the 30% drop in model accuracy that occurs when data is over-anonymized or the compliance fatigue that stems from managing overlapping GDPR and PCI DSS requirements. It's a common paradox; you must protect your data to avoid the average $4.45 million cost of a breach, yet the more you lock it down, the less value you can extract for commercialization or predictive analytics.

Implementing tokenization data security resolves this conflict by replacing sensitive values with non-sensitive placeholders that retain their original format and mathematical utility. This guide will show you how to build a strategic framework that secures assets while preserving the integrity required for AI training, valuation, and secure data sharing. We'll examine the specific architectural shifts necessary to transform your data into a liquid asset, allowing you to meet regulatory demands without sacrificing the precision of your machine learning models.

Key Takeaways

-

Understand the fundamental shift from traditional protection to a vault-based architecture that replaces sensitive assets with non-intrinsic placeholders to minimize risk.

-

Analyze the performance advantages of vault-based systems over encryption to optimize data retrieval speeds in high-scale enterprise environments.

-

Learn the methodology for transforming restricted PII into compliant, high-value datasets ready for AI training and strategic commercialization.

-

Master the strategic implementation of tokenization data security to protect sensitive information while maintaining the logical structure required for advanced business intelligence.

-

Discover a structured path from automated data discovery to precise asset valuation using on-premise agents and integrated reporting tools.

What is Tokenization in Data Security?

Tokenization is a rigorous security methodology that replaces sensitive data elements with non-sensitive equivalents, known as tokens. These tokens possess no intrinsic or exploitable value outside of a specific, controlled ecosystem. Unlike encryption, which relies on reversible mathematical algorithms to obscure information, tokenization describes a structural process where the relationship between the original data and the token is stored in a secure, isolated database called a Token Vault. This vault serves as the singular map required to retrieve the original information. By 2026, Gartner predicts that 60% of large enterprises will implement tokenization data security as a mandatory pillar of their Zero Trust architecture to mitigate the risks associated with expanded API and AI attack surfaces.

The strategic value of tokenization lies in its ability to reduce the scope of compliance audits, such as PCI DSS or GDPR. When an organization replaces raw data with tokens, the systems that handle those tokens are no longer considered in scope for many security regulations. This shift doesn't just improve security; it optimizes operational costs by narrowing the focus of defensive resources. In a 2024 analysis of data breach costs, organizations utilizing robust data abstraction techniques saw a 22% reduction in the total financial impact of unauthorized access events.

The Anatomy of a Secure Token

Security architects categorize tokens based on their utility and the level of risk they manage. High-value tokens (HVT) act as permanent surrogates for sensitive data in transactions, whereas low-value tokens (LVT) might only serve as temporary session identifiers. To maintain database integrity, many implementations utilize format-preserving tokenization. This ensures a token for a 16-digit credit card number remains a 16-digit string, allowing legacy systems to process the data without expensive schema modifications. The security of these tokens relies on non-mathematical mapping. By using randomization instead of an algorithm, the system ensures that an adversary cannot derive the original value from the token itself, regardless of the computing power applied.

Tokenization vs. Lexical Analysis

It's vital to distinguish tokenization data security from the lexical analysis used in Natural Language Processing (NLP). In NLP, tokenization is the act of breaking sentences into smaller linguistic units for machine learning analysis. In the context of security, it's a deterministic process where the same input yields the same token within a specific vault to ensure data consistency across distributed systems. Reversing a security token is impossible without authorized access to the Token Vault. This creates a hard barrier for attackers. If a breach occurs within an AI training set, the threat actor gains access to meaningless strings rather than actionable personal identifiers. This distinction is a technical requirement for meeting the robustness standards outlined in the 2024 EU AI Act.

Tokenization vs. Encryption: Which Should You Choose?

Deciding between encryption and tokenization requires a clear understanding of their underlying architectures. Encryption relies on sophisticated mathematical algorithms to scramble data into ciphertext; it's a process that requires a cryptographic key to reverse. If an attacker gains access to that key, the entire data set becomes vulnerable. In contrast, tokenization data security operates by replacing sensitive information with a non-sensitive surrogate, known as a token. This token has no intrinsic value and cannot be mathematically reversed. The original data resides in a highly secure, centralized vault, separate from the primary application environment.

Performance metrics often favor tokenization in high-volume API environments. Encryption is CPU-intensive, as every read and write operation requires complex calculations. Systems that transition to tokenization frequently see a 20% to 30% reduction in processing latency because the system only needs to perform a database lookup rather than a decryption routine. For AI training, this distinction is critical. Tokens allow models to identify referential patterns and relationships within datasets without ever exposing Personally Identifiable Information (PII) to the model's weights or the training environment.

Risk management profiles also differ significantly. With encryption, the data itself is the target, and the key is the single point of failure. According to the 2023 IBM Cost of a Data Breach report, the average cost of a breach reached $4.45 million, often exacerbated by poorly managed keys. Tokenization shifts the risk; even if a tokenized database is compromised, the attacker acquires only meaningless strings of characters. The actual value remains locked in the vault, which is easier to isolate and monitor with rigorous access controls.

When to Use Encryption

Encryption remains the standard for protecting unstructured data, such as internal emails, legal documents, and binary files. It's also the primary mechanism for securing data in transit across the open internet via TLS protocols. Organizations often choose encryption for short-term storage where building a dedicated vault infrastructure would be an unnecessary architectural burden. Certain regulatory frameworks specifically mandate end-to-end encryption for specific data types, making it a non-negotiable compliance requirement.

When Tokenization Wins

Tokenization is the superior choice for structured data like credit card numbers, Social Security numbers, and healthcare IDs. By using tokenization data security, businesses can achieve a 95% reduction in PCI DSS audit scope, as the sensitive data never touches the merchant's local servers. This approach also enables secure data marketplaces; companies can share tokenized insights with third parties without risking the exposure of raw assets. To maximize the value of your information architecture, it's vital to integrate these methodologies into a broader data strategy that balances security with operational agility.

The Tokenization Process: How It Works in Enterprise Environments

Implementing effective tokenization data security requires a methodical lifecycle that transforms sensitive information into non-exploitable assets. This process isn't a simple swap; it's a structured architecture designed to bridge the gap between raw data utility and rigorous compliance standards. In a 2023 study, it was found that 68% of enterprise data remains unmanaged, making a systematic approach to tokenization essential for risk mitigation.

-

Step 1: Data Discovery: The system identifies PII and PHI across the network. Organizations can't protect what they can't see, and this phase ensures that every sensitive field is mapped.

-

Step 2: Token Generation: The engine creates a unique, randomized identifier. This token has no mathematical relationship to the original value, rendering it useless to attackers.

-

Step 3: Vaulting: The original sensitive data is moved to a highly restricted, hardened environment. This vault is typically the only place where the relationship between the token and the data exists.

-

Step 4: Application Integration: Raw data is replaced with tokens in downstream systems. AI models and analytics platforms process these surrogates, ensuring that sensitive values never enter the computation layer.

-

Step 5: De-tokenization: This is the controlled retrieval process. Only authorized users or specific API calls can swap a token back for its original value, with every request generating a forensic audit log.

Secure Discovery and Metadata Analysis

Visibility is the foundation of any data strategy. On-premise agents allow organizations to identify sensitive assets without moving them across the network, which significantly reduces the attack surface during the initial phase. A discovery agent is a tool that analyzes metadata locally to prevent data leakage. By focusing on metadata cataloging, businesses can build a comprehensive data map. This ensures that 100% of sensitive information is identified before the tokenization data security protocols are even applied.

Format-Preserving Tokenization (FPT)

Legacy systems often require specific data formats to function correctly. For instance, a payment processing system might expect exactly 16 digits for a card number. FPT allows the token to mirror the original data's format and length. This is a critical efficiency gain; it eliminates the need for database schema changes, which can cost upwards of $20,000 per application in refactoring time. It's a balance of operational continuity and security. You maintain the system's logic while ensuring the sensitive plaintext remains isolated from the application environment.

Tokenization for AI and Data Commercialization

Modern AI development faces a structural bottleneck. While 90% of enterprise data remains untapped for machine learning, strict privacy regulations like the GDPR and the CCPA restrict the movement of raw personally identifiable information (PII). Tokenization resolves this conflict by decoupling the data's utility from its sensitive identity. By implementing tokenization data security, organizations transform high-risk databases into AI-ready assets that retain their statistical significance without exposing individual identities. This process ensures that the underlying patterns, correlations, and weights remain intact for model training, which is critical since the 2023 Gartner Strategic Technology Trends report suggests that 60% of data used for AI will be protected by privacy-enhancing technologies by 2024.

Tokenization in the AI Marketplace

Sellers can list datasets on a marketplace where the raw values remain in a secure vault. This architecture eliminates the trust gap between parties. Potential buyers perform pre-qualification through metadata analysis and statistical summaries rather than viewing raw records. Data Equity's AI-driven marketplace utilizes this framework to facilitate "Zero-Knowledge" transactions. Sellers maintain 100% control over their underlying records while providing enough mathematical evidence to prove the dataset's quality. This method allows for a rapid "Evidence-Based Assessment" of data assets, reducing the evaluation cycle from months to days. It's a shift from speculative data sharing to a structured, audit-ready commercial pipeline.

Valuing Tokenized Assets with DataVault

Data isn't an abstract resource; it's a balance sheet asset. The DataVault methodology uses deterministic valuation engines to assign a specific financial weight to datasets, even when they're tokenized. This process enables a precise calculation of intrinsic value based on factors like rarity, completeness, and utility for specific AI use cases. By integrating tokenization data security into the valuation process, compliance shifts from a cost center to a revenue driver. Organizations finally issue data valuation reports that satisfy both auditors and investors. This turns protected information into liquid capital, allowing firms to leverage their data for financing or strategic partnerships without compromising privacy. The result is a transparent ecosystem where security and profitability coexist through rigorous technical validation.

Discover how to quantify your data's market value through secure tokenization.

Implementing Tokenization with Data Equity

Securing data isn't just about building walls; it's about defining the financial value of the information inside them. Data Equity provides a structured path for enterprises to transform raw datasets into liquid assets through a rigorous methodological framework. Our process begins with the deployment of an on-premise agent. This tool facilitates secure discovery by analyzing metadata and structural patterns directly within your local environment. Your sensitive records never leave your firewall. This architecture addresses the core challenge of tokenization data security by maintaining strict data sovereignty while preparing the ground for valuation.

Once discovery is complete, the system generates a DataVault report. This document moves beyond technical specs to provide a concrete valuation based on market demand and data quality. It's a strategic shift from viewing data as a storage cost to recognizing it as balance sheet capital. By the end of 2024, industry analysts expect 30% of large organizations to use internal data marketplaces to streamline this exact transition. Data Equity facilitates this through a secure, AI-driven marketplace where pre-qualified buyers can bid on tokenized assets without ever accessing the underlying raw datasets.

The Data Equity Advantage

The advantage lies in the fusion of security and quantification. We don't just protect data; we price it. The on-premise analysis ensures that your intellectual property remains under your control. By accessing our network of pre-qualified buyers, you bypass the traditional risks associated with data brokerage. Every transaction is governed by smart contracts and granular access controls, ensuring that your tokenization data security protocols remain intact throughout the lifecycle of the asset. Our methodology reduces the "time-to-value" for data monetization by 40% compared to traditional consulting approaches.

Get Started with a Data Assessment

Requesting a DataVault report is the first step toward monetization. This assessment provides the transparency needed to justify investment in advanced data governance. You can integrate these tokenized data flows into your existing SaaS dashboard, providing real-time visibility into asset performance and security status. It's a plug-and-play solution for the modern, data-driven enterprise. Organizations that quantify their data assets see a 15% increase in operational efficiency within the first 12 months of implementation. Take control of your digital capital now.

Request your DataVault valuation report today and begin the journey from data protection to profit generation.

Advancing from Compliance to Capital Realization

Implementing tokenization data security isn't merely a defensive maneuver; it's a structural requirement for participating in the 2026 data economy. As organizations move toward AI-driven commercialization, the distinction between static encryption and dynamic tokenization becomes the difference between locked silos and liquid assets. Industry benchmarks from the PCI Security Standards Council highlight that tokenization can reduce compliance overhead by up to 80% for enterprise environments. This efficiency allows teams to focus on high-value analytics rather than administrative maintenance.

Data Equity provides the methodology to bridge the gap between technical protection and financial realization. Our AI-driven deterministic valuation engine assigns precise worth to your datasets, while our on-premise discovery agent ensures zero-leakage security throughout the identification phase. You'll gain direct access to pre-qualified buyers in the Data Equity marketplace, transforming security costs into measurable revenue streams. Unlock your data's monetary value with a DataVault report today. Precision in security creates the certainty required for high-stakes commercial growth.

Frequently Asked Questions

Is tokenization more secure than encryption?

Tokenization is inherently more secure for specific use cases because it removes the mathematical relationship between the original data and its surrogate. While encryption uses an algorithm to scramble data, tokenization data security relies on replacing sensitive info with a random string. This means a breach of the database yields no exploitable data without access to the separate, isolated vault.

Does tokenization reduce GDPR and PCI DSS compliance scope?

Tokenization significantly reduces the scope of PCI DSS compliance by removing sensitive cardholder data from the local environment. According to the PCI Security Standards Council, organizations can reduce their audit scope by up to 90% by ensuring sensitive data never enters their internal systems. Under GDPR, tokenization serves as a robust pseudonymization technique that simplifies right-to-be-forgotten requests.

Can tokenized data be used for AI model training?

Yes, tokenization data security protocols allow for AI model training while maintaining strict privacy standards. By using format-preserving tokenization, developers can retain the structural integrity and statistical properties of the dataset without exposing PII. This approach enables data scientists to build accurate predictive models while ensuring that 100% of the raw sensitive data remains isolated from the training environment.

What is a token vault and how do I secure it?

A token vault is a centralized, highly secure database that stores the mapping between original sensitive data and its corresponding tokens. Securing it requires a multi-layered defense strategy including hardware security modules and strict role-based access controls. Implementing 24/7 monitoring and automated alerts for unauthorized access attempts ensures the vault remains the single point of truth within a zero-trust architecture.

How much does it cost to implement enterprise tokenization?

Implementation costs vary based on volume and architecture; industry benchmarks from Gartner indicate that initial deployment for mid-sized enterprises typically ranges from $50,000 to over $250,000. These figures include integration fees, vault infrastructure, and maintenance. Companies often see a return on investment within 18 months through reduced compliance audit fees and lower cyber insurance premiums.

What happens if a token is stolen but the vault is secure?

If a token is stolen while the vault remains secure, the attacker gains no usable sensitive information. Since a token is a non-mathematical surrogate, it's worthless outside the specific ecosystem where the vault resides. This containment strategy limits the blast radius of a breach to the tokens themselves; administrators can invalidate or rotate them instantly without affecting the underlying sensitive records.

Can tokenization be reversed without the original system?

Tokenization can't be reversed without access to the original vaulting system because there's no mathematical link between the token and the data. Unlike encryption, which a sufficiently powerful computer could eventually crack via brute force, a token is a random placeholder. Deciphering it requires direct, authenticated access to the mapping database; this makes it a permanent safeguard against external decryption attempts.

How does tokenization affect database performance?

Tokenization typically introduces a latency overhead of 5 to 15 milliseconds per transaction depending on the vault's proximity and architecture. Modern distributed vault systems mitigate this delay by using edge computing and high-speed caching layers. For high-volume API environments, the performance impact is negligible compared to the computational cost of managing complex encryption keys across multiple microservices.